Global development organizations jump at the opportunity to showcase their best projects, to point to the lives they have improved, to the positive change they have brought about through months — or years — of hard work in communities around the world. And rightly so. But when it comes to discussing impact and outcomes, the aid industry can be less inclined to ask a simple, but crucial, question about projects that didn’t go well: “What went wrong?”

“What Went Wrong?” is a citizen journalism project that focuses a critical lens on failed foreign aid interventions — whether they are stalled, unfinished, broken, insufficient, unusable, or otherwise unwanted. The project does this by inverting the traditional power dynamic and putting impact evaluation in the hands of the people directly affected by aid interventions: the recipients. The team spent six months collecting 142 citizen reports from aid recipients across Kenya and investigating the projects that these reports unearthed.

Devex has collaborated with the team behind What Went Wrong? to produce six investigative stories exploring why some of these projects failed to deliver.

These six articles aim to help shed light on the wide array of reasons aid projects go off track, while showing the potential benefits of putting people at the center of the conversation about what works and what doesn’t.

Why focus on failure

In a sector that tends to reward good news with more funding, aid organizations can be reluctant to admit a project’s shortcomings — or, even worse, a project’s failure. Those who live and work in communities where projects operate are often more willing to share this information and evidence. Digging into community stories provides an opportunity to examine mistakes, identify gaps, and learn from incidents of failure that seldom receive attention in year-end reports and promotional material.

The purpose of What Went Wrong? is not to point fingers; these investigations show that “failure” is complicated. Problems occurred for a variety of reasons in the projects surveyed: Terrorist threats forced aid workers out of a region; criminals sabotaged existing interventions to establish a black market; funding and responsibility gaps derailed project transitions from NGOs to local government.

Read what went wrong: Gender-based violence hotline

Some of these problems may be unavoidable, but What Went Wrong? also found a consistent failure to clearly communicate the status of aid projects to members of the communities where they were being implemented.

In addition, many issues raised by those community members were previously unknown to the organizations in charge.

How the project works

In Kenya, community members submitted reports to a mobile survey system created on the Echo Mobile platform with support from Code for Africa’s ImpactAfrica initiative. To submit a report, citizens called or texted and then answered a series of questions about an aid project in their area. The report was then vetted by a team of journalists and shared via social media with the NGOs or foundations responsible for the projects. What Went Wrong? investigated 22 projects further through in-depth follow-up reporting; eight of them are documented in the six stories featured on Devex.

Read what went wrong: Water, electricity, and cartels

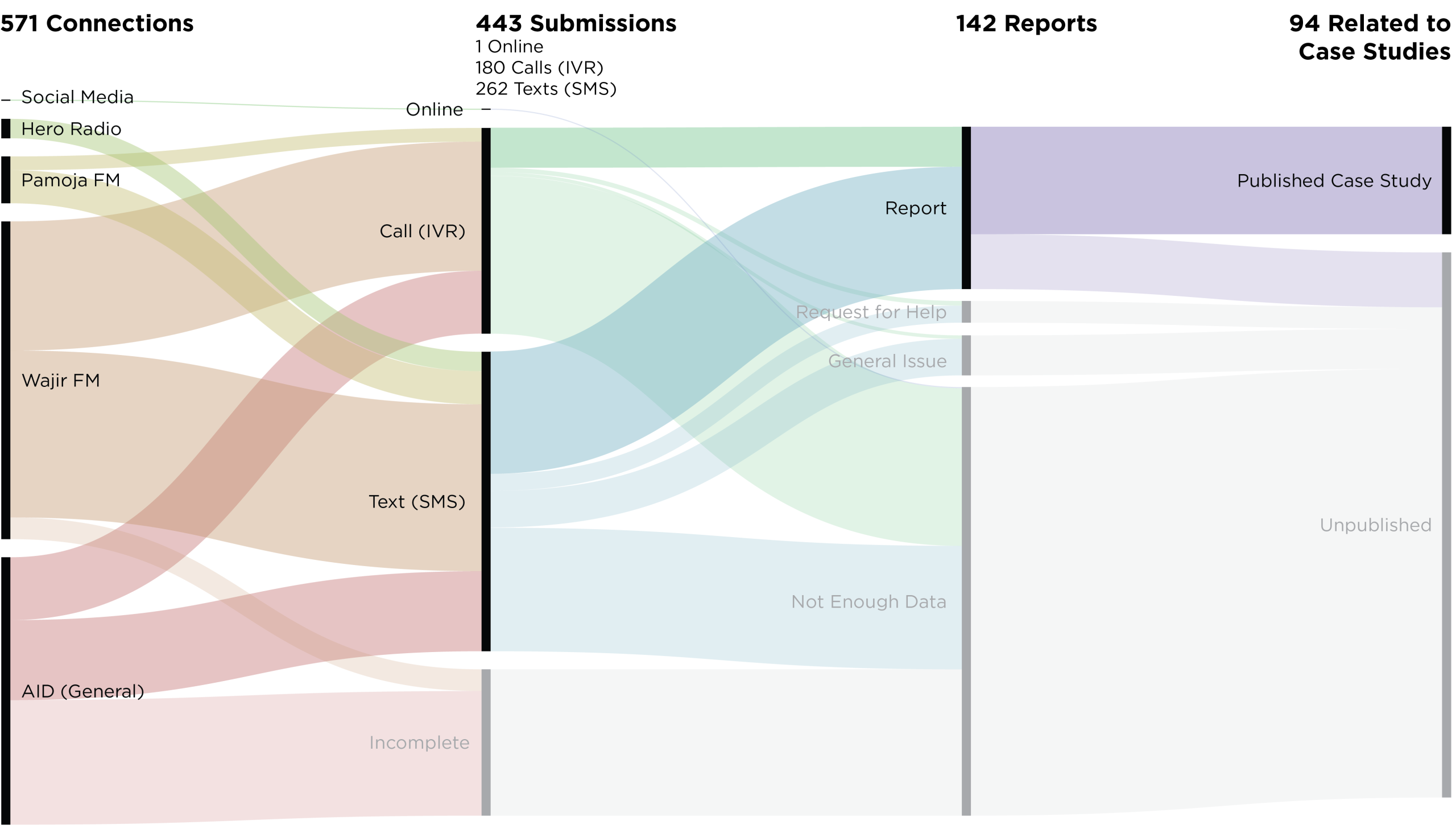

Over the course of two months, the survey was promoted on three community radio stations serving a diverse mix of urban and rural Kenyan communities: Hero Radio in Nakuru County, Pamoja FM in the Kibera neighborhood of Nairobi, and Wajir FM in Wajir County. Each station held a weekly segment about aid for the duration of the campaign to share updates and invite callers to discuss specific projects in more detail.

Over eight weeks, What Went Wrong? received 443 submissions: 262 via text message; 180 over voice calls or interactive voice response; and one from an online survey. As submissions came in, the team of journalists worked to verify the reports and link them with specific aid projects.
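
The channel breakdown above can be checked with a few lines of arithmetic. The sketch below is only an illustration built from the figures reported in this article; the variable and function names are invented for the example and are not part of the project's survey system.

```python
# Submission counts by channel, as reported in this article.
submissions_by_channel = {
    "text message": 262,
    "voice call or IVR": 180,
    "online survey": 1,
}

# Total number of submissions across all channels.
total = sum(submissions_by_channel.values())


def channel_shares(counts):
    """Return each channel's share of all submissions, in percent."""
    overall = sum(counts.values())
    return {name: 100 * n / overall for name, n in counts.items()}


shares = channel_shares(submissions_by_channel)

if __name__ == "__main__":
    print(f"total submissions: {total}")
    for name, pct in shares.items():
        print(f"{name}: {pct:.1f}%")
```

Run as a script, this prints the total and each channel's share, which makes the gap between mobile and online submissions easy to see.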

Vetting these reports was difficult as the quality of information submitted was variable. While some reports included details from subcounty to donors, and even specific individuals involved in the project, others included only a few words, or simply an acronym.

In reports such as these, it was difficult to distinguish acronyms from simple typos, and the team often had to combine multiple reports to understand which project was being referred to. Of 571 connections, 142 (25 percent) were complete enough to be considered “usable.” This number excluded submissions that did not refer to a specific aid project, such as requests for help or general complaints about conditions in the community.
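
The article does not describe any tooling the journalists used for this vetting, which appears to have been done by hand. Purely as an illustration of the typo problem, the sketch below uses approximate string matching from Python's standard `difflib` module to suggest a likely organization for a misspelled fragment. The list of known names, the function name, and the similarity cutoff are all assumptions made for the example.

```python
from difflib import get_close_matches

# Hypothetical reference list; the team's actual list of organizations
# is not given in the article.
KNOWN_ORGANIZATIONS = ["world bank", "unicef", "world food programme"]


def guess_organization(fragment, known=KNOWN_ORGANIZATIONS, cutoff=0.8):
    """Return the closest known name for a possibly misspelled fragment.

    Returns None when nothing is similar enough, so that a human
    reviewer can decide what the submission refers to.
    """
    cleaned = fragment.lower().strip()
    matches = get_close_matches(cleaned, known, n=1, cutoff=cutoff)
    return matches[0] if matches else None


if __name__ == "__main__":
    print(guess_organization("worl bank"))
    print(guess_organization("xyz"))
```

A suggestion like this could only ever be a starting point. A short acronym and a typo can look identical to a string matcher, so a journalist would still need to confirm each match against other reports.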

Read what went wrong: Cash transfer information gaps

The survey system was also available online and promoted over social media, yet over the course of the two-month campaign, only one online report was submitted. This was particularly surprising as there was significant engagement with the project on other social media channels — one Instagram post promoting the project, from the Everyday Africa account, received over 4,000 likes. While internet access is on the rise across most parts of Africa, one possible explanation for the lack of online submissions is that mobile is still the preferred means of communication for the target users.

What was reported?

From the 142 reports, the team identified 33 unique projects in six different counties. Journalists followed up with in-depth investigations of 22 projects, eight of which are featured in this six-part series. The projects reported fell into almost every sector of the aid industry, including health, education, gender equality, housing, and climate resilience. Eighty-two of the 142 reports (58 percent) were related to cash transfer projects.
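
For readers who want to retrace these figures, here is a minimal sketch of the arithmetic. The counts come from this article; the share of projects investigated is derived here and is not a figure the team reported. All names are invented for the example.

```python
# Counts as reported in this article.
usable_reports = 142
cash_transfer_reports = 82
unique_projects = 33
investigated_projects = 22
featured_projects = 8


def percent(part, whole):
    """Share of `part` in `whole`, rounded to the nearest whole percent."""
    return round(100 * part / whole)


# Share of usable reports that concerned cash transfer projects.
cash_transfer_share = percent(cash_transfer_reports, usable_reports)

# Derived figure (not stated in the article): share of identified
# projects that received in-depth follow-up reporting.
investigated_share = percent(investigated_projects, unique_projects)

if __name__ == "__main__":
    print(f"cash transfer share of reports: {cash_transfer_share}%")
    print(f"projects investigated in depth: {investigated_share}%")
```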

Read what went wrong: Finding uses for failed restrooms

A need for better communication

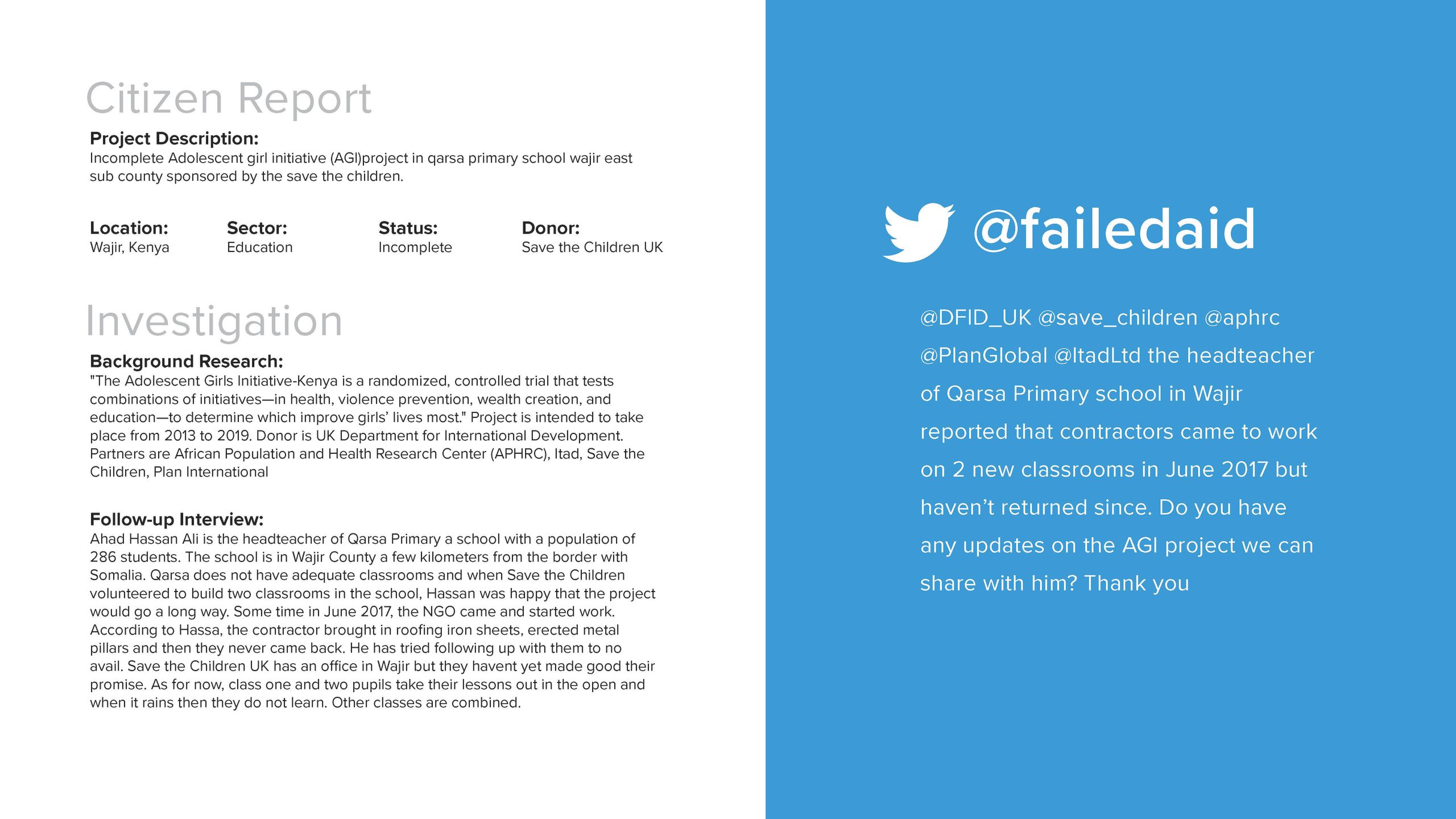

One of the biggest challenges for the What Went Wrong? team was crafting tweets of citizen reports that were specific, actionable, and accurate when the source was a survey submission as scant as “Wajir worl bank project [sic],” for example. There were no established best practices for how citizens’ reports should be shared, so the team had to strike a balance between drawing attention to recipients’ concerns and avoiding an accusatory or reactionary tone.

The responses from NGOs, the World Bank, and U.N. organizations to the What Went Wrong? tweets were limited. Some simply did not respond. Others replied with employee email addresses to contact for further information, moving the conversation out of the public sphere and into private channels. While it was difficult for What Went Wrong? journalists to start a conversation with many of these organizations over social media, one could imagine that for the average aid recipient it is even more challenging to connect with the proper parties when seeking additional information or expressing concern.

In both survey submissions and in-person conversations during follow-up reporting, recipients reported remembering who came to their community and what was promised, whether that was clean water, a new roof for a school, or monthly cash transfers. But what remained unclear to the recipients was why the irrigation system was never finished, why the roof was left unbuilt, why cash payments stopped coming, and whom to contact for answers.

Read what went wrong: Still waiting for housing

A majority of projects reviewed by What Went Wrong? were not failures, but instead large, multiyear initiatives that had not yet been completed. Time and again, community members reported a lack of communication from project implementing partners as plans changed, timelines shifted, or funding was cut. This sense of waiting often led to frustration, if not widespread demoralization. What Went Wrong?’s survey system revealed extensive gaps in knowledge on the ground that generated confusion, disillusionment, and resentment among the very people the projects were meant to serve and benefit.

What's next

For the next iteration of the survey system, the What Went Wrong? team is looking to create a simplified, low-cost version that can run on an ongoing basis. This version will be online, scalable to other countries, and tailored specifically to local journalists and community radio hosts.

The team worked with local journalists and radio journalists in Kibera, Nakuru, and Wajir. These journalists, particularly Nurdin Elmoge of Wajir FM and Philip Muhatia of Pamoja FM, were foundational to the success of the first pilot, as well as this Devex series; some of the most compelling survey submissions came from local radio partners. Local journalists were not only knowledgeable about aid interventions in their area, but also attuned to the community’s opinions of projects and aid organizations.

Read what went wrong: Nutrition distribution bottlenecks

By creating a system that aggregates the reports of local journalists and amplifies them to an international level, What Went Wrong? aims to improve accountability regionally, with implementation teams on the ground, and globally, by reaching major donors and foundations. Many of the same challenges of coalition building and outreach will persist, but a more targeted survey system will help streamline the report collection process — leading to more projects identified, increased visibility, and thus more pressure for accountability.

By continuing to document "failed" projects from the local perspective, the What Went Wrong? team hopes to further the development industry’s understanding of the confluence of reasons that aid projects can go wrong, while highlighting the value and potential impact of direct and ongoing communication with the community members aid organizations are working to serve.

For more information about What Went Wrong?, email info@whatwentwrong.foundation and follow @failedaid.

Story and graphics: Joe Wheeler

Production: Delia Behr

Photography: Peter DiCampo

Case studies: Anthony Langat

Editors: Michael Igoe, Deborah Charles, Delia Behr, Honesty Pern, Anne Paisley

The What Went Wrong? project team would like to thank Code for Africa’s ImpactAfrica grant for supporting the technology portion of the project, the Pulitzer Center on Crisis Reporting for supporting follow-up reporting on the ground, and the Magnum Foundation and Brown Institute for Media Innovation for supporting the early iteration of the reporting system.